Material Classification based on Training Data Synthesized Using a BTF Database

Michael Weinmann, Juergen Gall, and Reinhard Klein

Abstract

To cope with the rich appearance variation found in real-world data under natural illumination, we propose to synthesize training data that captures these variations for material classification. Synthetic training data created from separately acquired material and illumination characteristics overcomes a key limitation of existing material databases, which cover only a tiny fraction of the possible real-world conditions and are captured in controlled laboratory environments. However, it is essential to use a representation of material appearance that preserves fine details in the reflectance behavior of the digitized materials. Because BRDFs do not model mesoscopic effects and are therefore insufficient for many materials, we present a high-quality BTF database with 22,801 densely measured view-light configurations, including surface geometry measurements, for each of the 84 measured material samples. This representation is used to generate a database of synthesized images that depict the materials under different view-light conditions with their characteristic surface geometry, using image-based lighting to simulate the complexity of real-world scenarios. We demonstrate that our synthesized data allows materials to be classified under complex real-world scenarios.

Images

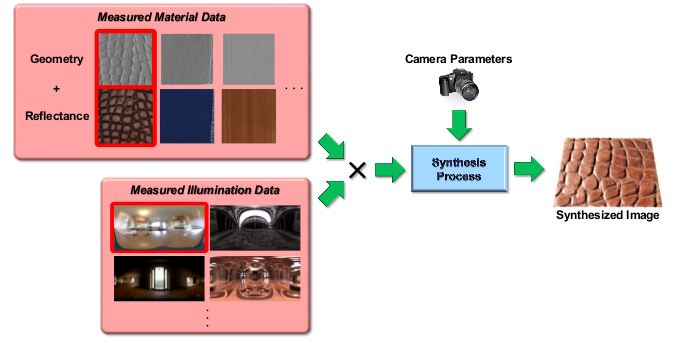

Synthesis of representative training data: The full Cartesian product of material data and environment lighting can easily be rendered by using a virtual camera with specified extrinsic and intrinsic parameters. The illustrated output image is generated using the material and illumination configuration highlighted in red.
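The Cartesian product described in the caption can be illustrated with a minimal sketch. It only enumerates the combinations that a renderer would then process; it does not perform any rendering and is not the pipeline used in the paper. The `RenderJob` type, the `enumerate_render_jobs` helper, and all material, environment, and view names are hypothetical placeholders, not identifiers from the database.

```python
from itertools import product
from typing import NamedTuple


class RenderJob(NamedTuple):
    """One image to synthesize (hypothetical descriptor, not from the paper)."""
    material: str      # which measured material sample to use
    environment: str   # which environment map provides the illumination
    view: str          # which virtual camera pose to render from


def enumerate_render_jobs(materials, environments, views):
    """Return one job per (material, environment, view) combination."""
    return [RenderJob(m, e, v) for m, e, v in product(materials, environments, views)]


# Placeholder names chosen for illustration only.
materials = ["carpet01", "leather03", "wood07"]
environments = ["courtyard", "office"]
views = ["frontal", "oblique"]

jobs = enumerate_render_jobs(materials, environments, views)
print(len(jobs))  # 3 materials x 2 environments x 2 views
```

Each resulting job would be handed to a renderer together with the camera's extrinsic and intrinsic parameters. Because the number of jobs is the product of the list lengths, adding a material or an environment map scales the training set multiplicatively.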

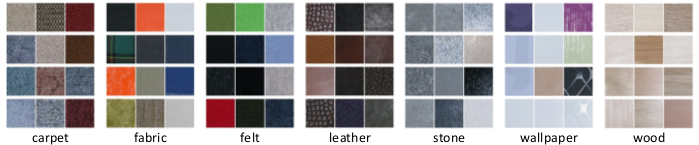

Representative images for the material samples in the 7 categories.

Data

If you have questions concerning the data, please contact Michael Weinmann.

Publications

Weinmann M., Gall J., and Klein R., Material Classification based on Training Data Synthesized Using a BTF Database (PDF), European Conference on Computer Vision (ECCV'14), Springer, LNCS 8691, pp. 156-171, 2014. © Springer-Verlag